
Live Nation Expands in Latin America with Dale Play Live Acquisition

Written by Sounds Space

Live Nation Expands in Latin America with Dale Play Live Acquisition: What It Means for the Future of the Live Music Industry

The global live music industry continues to evolve at a rapid pace, and one of the biggest developments in 2026 is Live Nation Entertainment's acquisition of a majority stake in Argentine concert promoter Dale Play Live. The move signals a major commitment to Latin America's booming live entertainment sector and highlights the region's growing importance in the global music business.

As international promoters seek new opportunities beyond North America and Europe, Latin America has emerged as one of the most exciting markets for concerts, festivals, and live music experiences. Despite economic challenges in some countries, fan demand for live events continues to rise, creating significant opportunities for promoters, artists, venues, and investors.

In this article, we'll explore why Live Nation's expansion into Latin America matters, what the Dale Play Live acquisition means for the industry, and how this move could shape the future of live music across the region.

Live Nation Continues Global Expansion

Live Nation Entertainment is the world's largest live entertainment company, operating across concerts, ticketing, artist management, and venue operations. Through its Ticketmaster division and extensive network of venues and promoters, Live Nation has become a dominant force in the global live events business.

Over the past decade, the company has steadily expanded its footprint across international markets. While North America remains its largest market, Live Nation has increasingly focused on high-growth regions where demand for live entertainment is accelerating.

Latin America represents one of the most attractive expansion opportunities. The region boasts a young population, strong music culture, growing urban centers, and increasing demand for international touring artists.

The acquisition of a majority stake in Dale Play Live demonstrates Live Nation's confidence in the long-term growth potential of Latin America's live music economy.

Who Is Dale Play Live?

Dale Play Live is a leading concert promoter based in Argentina and has built a strong reputation within the Latin American music industry.

The company has played a significant role in organizing concerts, tours, and music events featuring both local and international artists. Through its deep understanding of regional audiences and local market dynamics, Dale Play Live has established itself as a key player in South America's entertainment landscape.

By partnering with Dale Play Live, Live Nation gains access to valuable local expertise, established relationships, and operational infrastructure that can help accelerate growth throughout the region.

This combination of global resources and local knowledge creates a powerful partnership capable of delivering larger events and attracting major international tours.

Why Latin America Is Becoming a Music Industry Hotspot

The live music business in Latin America has experienced significant growth in recent years.

Several factors are contributing to this momentum:

Young and Passionate Audiences

Latin America has one of the youngest populations in the world. Music plays a central role in everyday culture, and fans are highly engaged with both local and international artists.

Concert attendance continues to increase as younger generations prioritize live experiences over traditional forms of entertainment.

Streaming Growth Drives Touring Demand

Streaming platforms have helped artists reach audiences across Latin America more effectively than ever before.

As artists build larger fan bases through platforms like Spotify, YouTube, and TikTok, demand for live performances naturally follows. International acts that once visited the region occasionally are now making Latin America a regular stop on global tours.

Growing Festival Culture

Music festivals have become increasingly popular throughout countries such as Argentina, Brazil, Mexico, Colombia, and Chile.

Major events attract hundreds of thousands of attendees annually, creating new opportunities for promoters and sponsors.

The success of these festivals demonstrates the region's appetite for large-scale live entertainment experiences.

Economic Challenges Haven't Stopped Concert Demand

One of the most interesting aspects of Live Nation's investment is that it comes at a time when some Latin American economies continue to face challenges, including inflation, currency fluctuations, and political uncertainty.

Historically, economic instability has tended to reduce consumer spending on entertainment. However, recent trends suggest that live music remains a priority for many fans.

Across the world, consumers increasingly view concerts as valuable experiences rather than luxury purchases. This shift has helped fuel the post-pandemic resurgence of live events.

Many industry experts believe the demand for live music is more resilient than previously assumed. Fans are often willing to spend on experiences that create lasting memories, even during uncertain economic conditions.

Live Nation's investment suggests confidence that long-term growth will outweigh short-term economic concerns.

Benefits for Artists Touring Latin America

The acquisition could create numerous opportunities for artists looking to expand their presence in the region.

With Live Nation's global infrastructure combined with Dale Play Live's local expertise, artists may benefit from:

- Improved tour logistics

- Expanded venue access

- Larger promotional campaigns

- Better audience targeting

- Increased touring opportunities

- Enhanced production capabilities

Major international artists could find it easier to organize extensive Latin American tours, while regional artists may gain access to larger platforms and broader audiences.

This increased connectivity between global and regional markets could help elevate Latin America's position within the worldwide touring ecosystem.

What This Means for the Concert Industry

The deal reflects a broader trend within the live entertainment business.

Major promoters are increasingly seeking strategic acquisitions and partnerships to strengthen their positions in emerging markets. Rather than building operations from scratch, companies are partnering with established local promoters who understand regional audiences and business practices.

This strategy allows companies like Live Nation to scale more efficiently while maintaining strong local connections.

For the industry as a whole, this could lead to:

More International Tours

Artists may include additional Latin American dates in future tour schedules.

Larger Event Productions

Global investment often brings higher production standards and larger-scale events.

Increased Competition

Other promoters may accelerate investments across Latin America to remain competitive.

New Opportunities for Local Talent

Regional artists could gain exposure through larger events and international partnerships.

The Future of Live Music in Latin America

The future appears bright for Latin America's live music industry.

The region continues to demonstrate strong audience engagement, increasing concert attendance, and growing interest from global entertainment companies.

As infrastructure improves and more international investment enters the market, Latin America is likely to become an even more important destination for touring artists and major music events.

The Live Nation-Dale Play Live partnership may ultimately serve as a catalyst for further growth across the region.

Whether through stadium concerts, music festivals, arena tours, or emerging artist showcases, the opportunities for expansion appear significant.

For fans, this could mean access to more events, bigger productions, and a wider variety of artists performing throughout the region.

For the music industry, it reinforces a growing reality: Latin America is no longer simply an emerging market; it is becoming one of the most important drivers of global live music growth.

Conclusion

Live Nation's acquisition of a majority stake in Dale Play Live represents more than just a business transaction. It is a clear signal that Latin America has become a strategic priority within the global live entertainment industry.

By combining Live Nation's worldwide reach with Dale Play Live's regional expertise, the partnership is positioned to capitalize on growing demand for concerts and live experiences across the region.

As the music industry continues to evolve, investments like this highlight the increasing importance of live events and the growing influence of Latin American audiences on the global stage.

For artists, promoters, venues, and fans alike, the future of live music in Latin America looks more exciting than ever.

Italy Cancels Kanye West and Travis Scott Concerts After Protests and Controversy

Written by Sounds Space

Italy Cancels Kanye West and Travis Scott Concerts After Controversy and Protests

Italy Pulls the Plug on Kanye West and Travis Scott Concerts

Italy has reportedly canceled planned performances involving hip-hop superstars Kanye West and Travis Scott following widespread controversy, public protests, and growing concerns from local authorities. The decision has sparked intense debate among music fans, free speech advocates, cultural organizations, and local communities.

The cancellation marks another chapter in the ongoing scrutiny surrounding Kanye West, whose public statements and behavior over the past several years have generated significant backlash globally. With the crowd management controversies around Travis Scott's live events adding to those concerns, Italian officials faced mounting pressure from activists and residents who opposed hosting the artists.

As news of the cancellations spread, social media platforms erupted with mixed reactions. Some supporters argued that artists should be separated from their controversies, while critics praised the decision as a responsible move by organizers and local authorities.

Why Were the Concerts Canceled?

The primary reasons behind the cancellations stem from public opposition, political pressure, and concerns about the artists' reputations.

Kanye West has remained one of the most controversial figures in modern music. While widely recognized as one of hip-hop's most influential artists and producers, his public comments in recent years have led to widespread criticism from governments, corporations, media outlets, and advocacy groups.

Several Italian organizations reportedly voiced concerns about providing a platform for an artist whose statements have repeatedly generated international controversy. Protest groups argued that hosting such events could send the wrong message and potentially damage the reputation of the cities involved.

Meanwhile, Travis Scott has continued to face public scrutiny over concert safety and crowd management. Although the rapper remains one of the world's most successful touring artists, critics questioned whether large-scale events featuring controversial performers were appropriate amid heightened public sensitivity.

The combination of these factors ultimately contributed to organizers reevaluating the planned performances.

Public Protests Played a Major Role

Public demonstrations and online campaigns were instrumental in influencing the outcome.

Activists organized petitions, social media campaigns, and public demonstrations calling for authorities to reconsider the concerts. Many protesters argued that public venues should prioritize artists who promote positive social values and community engagement.

Residents in several regions expressed concerns about potential disruptions, security challenges, and the broader cultural implications of hosting events involving highly polarizing figures.

The growing momentum of these campaigns placed additional pressure on event organizers, sponsors, and local governments. In today's digital age, public sentiment can significantly influence major entertainment decisions, and this situation proved no exception.

The controversy highlights how public opinion increasingly shapes the live music industry, particularly when artists become associated with political or social debates.

Impact on Kanye West's International Touring Plans

The cancellation represents another setback for Kanye West's international performance ambitions.

Despite ongoing controversy, Kanye remains one of the most influential figures in music history. Albums such as The College Dropout, Graduation, and My Beautiful Dark Twisted Fantasy continue to be celebrated by fans and critics alike.

However, his public image has undergone a significant transformation in recent years. Multiple brands, business partners, and event organizers have distanced themselves from the artist following controversial statements and actions.

As a result, securing venues, sponsorships, and international partnerships has become increasingly complicated. The Italian cancellations may signal that promoters are becoming more cautious when evaluating potential events involving high-profile but controversial performers.

For fans hoping to see Kanye perform live in Europe, these developments create uncertainty regarding future tour announcements.

Travis Scott Faces Another Challenge

Although much of the controversy centered around Kanye West, Travis Scott has also found himself drawn into the discussion.

Known for chart-topping albums like Astroworld and Utopia, Travis Scott remains one of the most commercially successful artists in modern hip-hop.

His concerts are famous for their high energy, elaborate production, and massive fan attendance. However, discussions surrounding concert safety have followed the artist since previous incidents at major live events.

While supporters argue that Travis Scott has worked to improve safety measures and event management practices, critics continue to raise concerns whenever large-scale performances are announced.

The Italian situation demonstrates how past controversies can continue influencing public perception and event planning decisions years later.

The Growing Influence of Public Accountability in Music

The cancellations reflect a broader trend within the global entertainment industry.

Artists today operate in an environment where public scrutiny extends far beyond their music. Statements made online, interviews, social media activity, and personal behavior can significantly impact career opportunities.

Promoters, sponsors, venue operators, and government officials increasingly consider reputational risks when organizing major events. Public backlash can affect ticket sales, sponsorship agreements, media coverage, and overall event success.

This shift has created new challenges for artists navigating the balance between artistic freedom and public accountability.

Supporters of the cancellations argue that communities have the right to decide which public events align with their values. Critics, however, warn that such decisions may contribute to growing restrictions on artistic expression.

The debate remains complex and highly polarizing.

Fan Reactions Are Divided

Not surprisingly, reactions from fans have been mixed.

Many Kanye West supporters expressed disappointment, arguing that music should be judged separately from political or personal controversies. Some fans accused authorities of censorship and claimed that audiences should be free to decide whether to attend performances.

Others welcomed the cancellations, believing that public figures should face consequences for controversial actions and statements.

Similarly, Travis Scott fans voiced frustration that the rapper became entangled in broader controversies despite maintaining a successful touring career.

The passionate responses demonstrate the powerful connection fans maintain with artists, even amid public criticism and controversy.

What This Means for the Future of Live Music

The cancellation of Kanye West and Travis Scott concerts in Italy could have implications beyond a single event.

Promoters around the world are increasingly aware of the reputational risks associated with booking controversial performers. Public campaigns can quickly gain momentum online, forcing organizers to reconsider previously approved events.

At the same time, artists continue to enjoy strong fan support despite criticism, creating difficult decisions for event planners attempting to balance public demand with community concerns.

The situation highlights a larger transformation within the entertainment industry, where cultural conversations, social responsibility, and public perception play a greater role than ever before.

Conclusion

Italy's decision to cancel planned concerts involving Kanye West and Travis Scott reflects the growing influence of public opinion in today's music industry. While supporters view the move as a necessary response to ongoing controversies, critics argue it raises important questions about artistic freedom and censorship.

Regardless of where individuals stand on the issue, the cancellations underscore a reality facing modern artists: public perception can significantly impact opportunities, partnerships, and live performances.

As the music industry continues to evolve, the intersection of entertainment, social responsibility, and public accountability will remain a defining topic for artists, promoters, and fans worldwide.

deadmau5 Sells Signed Synths and Studio Gear on Reverb + Exclusive mau5head Giveaway

Written by Sounds Space

deadmau5 Is Selling Signed Synths, Studio Gear, and Giving Away a Space-Themed mau5head through Reverb

Electronic Music Fans Have a Rare Opportunity to Own a Piece of deadmau5 History

Electronic music producer deadmau5 is opening the doors to his creative universe once again. Through a special sale on Reverb, the legendary producer, DJ, and technology enthusiast is offering fans the chance to purchase signed synthesizers, modular equipment, studio gear, and other unique pieces from his personal collection.

As if that wasn't exciting enough, one lucky buyer will also receive a one-of-a-kind space-themed mau5head that was previously used during performances in Las Vegas.

For music producers, synth collectors, deadmau5 fans, and electronic music enthusiasts, this is more than just another gear sale. It is a rare opportunity to own equipment directly connected to one of the most influential figures in modern electronic music.

The announcement has generated significant buzz across the music production community, with many eager to discover which iconic pieces of gear are being made available and what stories they might hold.

Who Is deadmau5?

Before diving into the sale itself, it's worth understanding why this event has attracted so much attention.

Born Joel Zimmerman, deadmau5 has become one of the most recognizable names in electronic dance music. Known for his signature mau5head helmet and groundbreaking productions, he has spent nearly two decades shaping the EDM landscape.

His extensive catalog includes globally recognized tracks such as:

- Ghosts 'n' Stuff

- Strobe

- Raise Your Weapon

- The Veldt

- Monophobia

- Some Chords

Beyond his music, deadmau5 has earned a reputation as one of the industry's most passionate technology and synthesizer enthusiasts.

His studio setups are legendary, often featuring rare vintage synthesizers, cutting-edge modular systems, custom-built workstations, and unique electronic instruments.

Because of this reputation, any opportunity to acquire gear directly from his collection instantly becomes major news within the music production world.

Why Producers Love deadmau5's Studio Setup

One reason this Reverb sale has generated so much excitement is deadmau5's long-standing reputation as a gear enthusiast.

Unlike many producers who rely primarily on software, deadmau5 has consistently invested in high-end hardware instruments and studio equipment.

Over the years, fans have watched countless studio tours showcasing rooms filled with:

- Analog synthesizers

- Modular Eurorack systems

- Vintage keyboards

- High-end monitoring systems

- Professional mixing equipment

- Custom-built electronic devices

His studio has often been described as one of the most impressive electronic music production environments in the world.

For producers, owning a piece of equipment that contributed to the creative process behind some of electronic music's most influential productions carries tremendous appeal.

What's Included in the Reverb Sale?

The sale features a carefully selected collection of gear from deadmau5's personal inventory.

Among the most sought-after items are signed synthesizers, including a signed microKORG that is expected to attract significant attention from collectors.

The sale also includes various pieces of studio hardware and modular gear that have played a role in Zimmerman's creative workflow over the years.

Potential buyers can expect to find:

Signed Synthesizers

Signed instruments often become collector's items, especially when associated with influential artists.

For fans, a signed synthesizer represents both a practical studio tool and a unique piece of music history.

Modular Equipment

Modular synthesis has experienced explosive growth over the past decade, and deadmau5 has long been an advocate of modular systems.

Many producers are particularly excited about the possibility of acquiring modules directly from his setup.

Studio Hardware

Additional equipment may include processors, controllers, interfaces, and various production tools used throughout his career.

Rare Collectibles

Some pieces are expected to have value beyond their technical functionality due to their direct connection to the artist.

This combination of utility and collectibility makes the sale especially attractive.

The Space-Themed mau5head Giveaway

Perhaps the most exciting element of the promotion is the inclusion of a space-themed mau5head giveaway.

The mau5head has become one of the most recognizable symbols in electronic music culture.

Over the years, deadmau5 has worn countless variations of the iconic helmet, each representing different creative eras and performance concepts.

The space-themed version being offered reportedly appeared during performances in Las Vegas, adding another layer of significance for collectors.

For many fans, owning a genuine mau5head used in a professional performance would be the ultimate piece of deadmau5 memorabilia.

The giveaway adds an extra level of excitement to the Reverb campaign and is likely to drive substantial participation from fans worldwide.

Why Artists Sell Their Studio Gear

Some fans may wonder why successful artists choose to part with equipment from their personal collections.

In reality, gear turnover is extremely common among professional musicians and producers.

Technology evolves rapidly, and creative needs change over time.

Artists frequently:

- Upgrade equipment

- Reorganize studios

- Simplify workflows

- Experiment with new technologies

- Make room for future acquisitions

For deadmau5, who has always embraced innovation, selling older gear likely creates opportunities for new creative exploration.

Additionally, gear sales allow fans to connect directly with the artist's creative journey.

The Growing Market for Artist-Owned Gear

The demand for artist-owned equipment has increased dramatically in recent years.

Collectors and producers are increasingly interested in acquiring gear that carries a story.

Ownership history can significantly increase an item's desirability.

Previous sales involving equipment from major artists have often attracted global attention, with some items selling for substantially more than their original retail prices.

Factors that drive value include:

- Artist ownership

- Signature authentication

- Historical significance

- Studio usage

- Rarity

- Condition

deadmau5's gear checks several of these boxes simultaneously, making the collection particularly appealing.

Why Reverb Has Become the Go-To Marketplace

The sale is taking place through Reverb, one of the world's largest marketplaces for musical instruments and professional audio equipment.

Over the years, Reverb has become a preferred destination for artists looking to sell equipment directly to fans.

The platform offers:

- Global reach

- Secure transactions

- Verified listings

- Detailed product information

- Strong community engagement

Several major artists have partnered with Reverb to sell personal gear collections, turning these sales into highly anticipated events.

The platform's ability to connect artists directly with musicians and collectors makes it an ideal venue for projects like this.

What This Means for Music Producers

Beyond the collector appeal, the sale also offers valuable inspiration for producers.

Many aspiring musicians spend years studying the tools and workflows used by successful artists.

Seeing the types of equipment deadmau5 has chosen to use provides insight into his production philosophy.

It reinforces an important lesson:

Great music comes from creativity, experimentation, and skill, not simply from owning expensive gear.

While the equipment itself is fascinating, the real value lies in understanding how artists use technology to bring ideas to life.

The Legacy of deadmau5 in Music Technology

Few electronic artists have had as much influence on music technology culture as deadmau5.

Throughout his career, he has been an outspoken advocate for innovation, transparency, and technical excellence.

His influence extends far beyond music production and includes:

- Studio design

- Sound engineering

- Live performance technology

- Synthesizer culture

- Modular synthesis communities

- Creative workflow development

Because of this broader impact, even a simple gear sale becomes a major event within the music technology world.

Fans are not just purchasing equipment; they are buying a tangible connection to one of electronic music's most influential creative minds.

Collecting Pieces of Electronic Music History

Music memorabilia has evolved dramatically over the years.

While collectors once focused primarily on guitars and rock-and-roll artifacts, electronic music fans now seek equipment that played a role in shaping modern dance music culture.

Items associated with pioneering producers often become historical artifacts in their own right.

As electronic music matures as a genre, interest in preserving its history continues to grow.

Sales like this help document and celebrate the technological side of music creation.

Conclusion

deadmau5's Reverb sale offers fans and producers an extraordinary opportunity to own pieces of electronic music history.

From signed synthesizers and modular equipment to rare studio gear and the highly coveted space-themed mau5head giveaway, the event showcases the unique intersection of music, technology, and fan culture.

For collectors, the sale presents the chance to acquire authentic items connected to one of EDM's most influential artists.

For producers, it offers insight into the tools that helped shape some of the most iconic electronic music of the modern era.

Whether you're a lifelong deadmau5 fan, a synth enthusiast, or a professional music producer, this Reverb event represents a rare opportunity to bring a piece of Joel Zimmerman's creative universe into your own studio.

And judging by the excitement already surrounding the sale, these pieces of gear won't stay available for long.

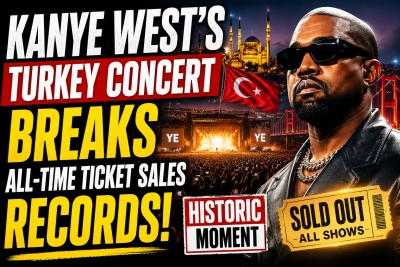

Kanye West's Turkey Concert Breaks All-Time Ticket Sales Records in 2026

Written by Sounds Space

Kanye West's Turkey Concert Breaks All-Time Ticket Sales Records

The Historic Concert That Shook the Global Music Industry

In a stunning development that has captivated the global music industry, Kanye West's highly anticipated concert in Turkey has officially broken all-time ticket sales records. The event, which drew unprecedented demand from fans across Europe, Asia, the Middle East, and beyond, has become one of the most talked-about live music events of 2026.

Known for pushing boundaries in music, fashion, and entertainment, Kanye West has once again demonstrated his extraordinary ability to command global attention. Despite years of controversy, public scrutiny, and unpredictable career moves, the artist has proven that his influence remains stronger than ever.

The record-breaking success of the Turkey concert highlights not only Kanye's enduring star power but also Turkey's growing status as a major destination for international live entertainment.

A Record-Breaking Achievement

According to industry reports, ticket sales for Kanye West's Turkey concert surpassed expectations within hours of release. Fans flooded online ticketing platforms, creating virtual queues that stretched into the hundreds of thousands.

The overwhelming demand resulted in:

- Record-breaking ticket sales in the first 24 hours.

- Ticket platforms experiencing heavy traffic and temporary outages.

- International fans booking flights and accommodation immediately after securing tickets.

- Secondary market prices skyrocketing due to demand.

Industry analysts have described the event as one of the fastest-selling concerts ever recorded, placing it alongside some of the largest global live events in music history.

For Turkey's entertainment sector, the achievement represents a landmark moment that could reshape how international artists view the country's concert market.

Why Kanye West Remains One of the Biggest Names in Music

Many industry observers have asked how Kanye West continues to generate such enormous demand after more than two decades in the spotlight.

The answer lies in his unique combination of artistry, controversy, innovation, and cultural relevance.

Since emerging as a producer in the early 2000s, Kanye has consistently reinvented himself. Albums such as:

- The College Dropout

- Late Registration

- Graduation

- 808s & Heartbreak

- My Beautiful Dark Twisted Fantasy

- Yeezus

- Donda

have influenced multiple generations of artists across hip-hop, pop, electronic music, and even rock.

His ability to remain culturally relevant while constantly evolving has allowed him to maintain a fanbase that spans age groups, countries, and musical genres.

The Turkey concert demonstrates that Kanye's global appeal remains virtually unmatched.

Turkey Emerges as a Global Concert Destination

The success of the event also highlights Turkey's rapidly growing importance in the international entertainment market.

Over the past decade, Turkey has increasingly attracted major artists due to its:

- Strategic location between Europe and Asia.

- Modern stadium infrastructure.

- Expanding tourism sector.

- Young and music-loving population.

- Strong international travel connections.

Cities such as Istanbul have become major hubs for global entertainment, attracting millions of visitors annually.

The massive demand for Kanye West's concert could encourage promoters and booking agencies to bring even more superstar artists to Turkey in the coming years.

Industry insiders believe this concert may serve as a turning point for the country's live events sector.

International Fans Flock to Turkey

One of the most remarkable aspects of the ticket sales phenomenon has been the international audience.

Fans reportedly purchased tickets from:

- United Kingdom

- Germany

- France

- Italy

- Spain

- United States

- Saudi Arabia

- United Arab Emirates

- Qatar

- Egypt

- Azerbaijan

- Kazakhstan

and many other countries.

The event has effectively transformed into a global gathering of Kanye West supporters.

Travel agencies have already reported increased bookings, while hotels and local businesses are preparing for a significant economic boost surrounding the concert weekend.

This international demand demonstrates the power of music tourism and its growing impact on local economies.

The Social Media Explosion

As tickets became available, social media platforms exploded with reactions from fans.

Hashtags related to the concert quickly began trending across:

- X

- TikTok

- YouTube

Thousands of users shared screenshots of successful purchases, countdown posts, travel plans, and excitement about attending the event.

Fan communities dedicated to Kanye West experienced record engagement levels as supporters discussed stage design predictions, possible guest appearances, and potential setlists.

The social media buzz has only amplified interest in the event, creating a cycle of publicity that continues to drive global attention.

What Fans Can Expect From the Concert

Although official details remain limited, expectations for the show are extraordinarily high.

Kanye West has built a reputation for creating concert experiences that go far beyond traditional live performances.

Past tours have featured:

- Massive custom-built stages.

- Innovative visual effects.

- Advanced lighting technology.

- Theatrical storytelling.

- Groundbreaking production design.

Many fans expect the Turkey concert to include a career-spanning setlist featuring hits from multiple eras of his catalog.

Potential performances could include fan favorites such as:

- Stronger

- Gold Digger

- Heartless

- Power

- Runaway

- All of the Lights

- Bound 2

- Hurricane

Given Kanye's reputation for surprises, special guests and unexpected performances remain a possibility.

The Economic Impact of the Event

Beyond music, the concert is expected to generate significant economic benefits for Turkey.

Large-scale entertainment events create a ripple effect across numerous industries.

Expected beneficiaries include:

Hospitality Sector

Hotels, resorts, and short-term rental providers are already experiencing increased demand.

Transportation Industry

Airlines, airports, ride-sharing services, and public transportation networks are expected to see substantial increases in passenger traffic.

Restaurants and Retail

Visitors attending the event will contribute additional spending at local restaurants, cafes, shopping centers, and tourist attractions.

Tourism Industry

Many international attendees are extending their stays, combining the concert experience with broader tourism activities throughout Turkey.

Economic analysts estimate that the overall financial impact could reach tens of millions of dollars.

A New Era for Live Music Events

The record-breaking success of Kanye West's Turkey concert also reflects broader changes within the global concert industry.

Since the pandemic era, live music has experienced an unprecedented resurgence.

Fans are increasingly willing to:

- Travel internationally for concerts.

- Purchase premium ticket packages.

- Attend destination music events.

- Spend more on exclusive experiences.

Major artists now view live performances as one of the most important aspects of their careers.

Concerts have evolved into large-scale cultural events that combine entertainment, tourism, fashion, and social media engagement.

Kanye's Turkey concert perfectly represents this new reality.

Why Demand Was So Extraordinary

Several factors contributed to the historic ticket demand.

Scarcity

Kanye West does not perform as frequently as many other major artists. Limited opportunities naturally increase demand.

Global Fanbase

Few artists possess such a diverse international audience.

Curiosity Factor

Every Kanye event carries an element of unpredictability, making each performance feel unique.

Cultural Significance

The concert has been marketed as more than just a live show. It has become a major cultural moment.

Destination Appeal

Turkey's popularity as a travel destination has encouraged many fans to combine entertainment with tourism.

Together, these factors created the perfect conditions for record-breaking sales.

Industry Reactions

Music executives, promoters, and entertainment analysts have been quick to comment on the achievement.

Many industry experts believe the event confirms that superstar artists continue to possess immense drawing power despite changing music consumption habits.

Streaming may dominate everyday listening, but live experiences remain irreplaceable.

Industry observers also note that the success reinforces the value of event exclusivity. When artists create rare and highly anticipated performances, demand can reach extraordinary levels.

For concert promoters worldwide, Kanye's Turkey concert may become a case study in event marketing and audience engagement.

The Future of Major Concert Events in Turkey

The success of this event could have long-term implications for Turkey's entertainment landscape.

Promoters may now pursue:

- More stadium concerts.

- International music festivals.

- Exclusive artist residencies.

- Multi-day entertainment experiences.

- Cross-border tourism partnerships.

Global artists and management teams are likely paying close attention to the results.

If future events replicate this success, Turkey could become one of the most important concert markets in Europe and the Middle East.

Kanye West Continues to Defy Expectations

Throughout his career, Kanye West has repeatedly challenged conventional wisdom.

Whether through groundbreaking albums, fashion ventures, creative risks, or headline-generating moments, he has consistently remained at the center of popular culture.

The record-breaking ticket sales for his Turkey concert serve as yet another reminder of his unique ability to capture public attention.

While opinions about Kanye West may remain divided, the numbers tell a clear story: audiences around the world are still eager to see him perform.

Conclusion

Kanye West's Turkey concert has entered music history by breaking all-time ticket sales records and generating unprecedented global interest.

The event showcases the enduring power of live music, the continued influence of one of the world's most recognizable artists, and Turkey's emergence as a major international entertainment destination.

With fans traveling from across the globe, social media buzzing with excitement, and economic benefits expected to ripple throughout the region, the concert represents far more than a single performance.

It is a cultural phenomenon.

As the concert date approaches, anticipation continues to build, and one thing is certain: Kanye West has once again proven that when it comes to commanding worldwide attention, few artists can compete with his reach, influence, and ability to create history.

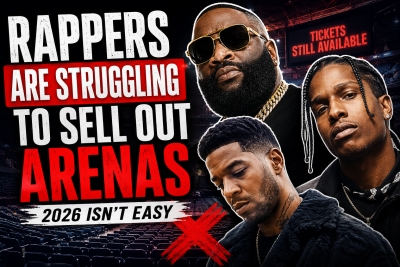

Why Rappers Are Struggling to Sell Out Arenas in 2026

Written by Sounds Space

The New Reality of Hip-Hop Touring

For decades, hip-hop has been one of the most dominant genres in the music industry. From sold-out stadiums to billion-stream artists, rap music has consistently shaped popular culture and generated massive revenue for artists, labels, and promoters alike.

However, 2026 has revealed an uncomfortable truth for many major rap artists: selling out arenas is becoming increasingly difficult.

From Rick Ross and A$AP Rocky to Kid Cudi and several other well-known names, many rappers are finding it harder than ever to fill large venues. Tours are seeing discounted tickets, venue changes, postponed dates, and in some cases, outright cancellations.

The question is simple: Why are rappers struggling to sell out arenas in 2026?

The answer is far more complex than declining popularity. Instead, it reflects a major shift in consumer behavior, ticket pricing, streaming culture, and the changing relationship between artists and fans.

The Arena Touring Model Is Becoming Harder to Sustain

The traditional arena model was built around one core principle: massive demand.

Artists would release a hit album, build anticipation, and then capitalize on that momentum through large-scale tours. Fans had fewer entertainment options and were often willing to spend significant money to see their favorite performers live.

Today, that formula is breaking down.

Fans are facing economic pressures worldwide, including rising living costs, housing expenses, and inflation. Concert tickets are no longer viewed as affordable entertainment for many households.

A single arena concert can easily cost:

- $100–$300 for standard tickets

- $50–$100 for parking

- $20–$50 for food and drinks

- Additional travel and accommodation costs

For many fans, attending one concert can cost several hundred dollars.
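As a rough illustration of how those line items add up, the following Python sketch sums the low and high ends of the ranges listed above. The dictionary keys and the function name are made up for this example, and travel and accommodation are left out because no figures are given for them.

```python
# Per-person cost ranges (USD) taken from the list above.
# Travel and accommodation are omitted: no figures are given for them.
COST_RANGES = {
    "standard_ticket": (100, 300),
    "parking": (50, 100),
    "food_and_drinks": (20, 50),
}


def total_cost_range(ranges):
    """Return (lowest, highest) total by summing each item's bounds."""
    low = sum(lo for lo, _ in ranges.values())
    high = sum(hi for _, hi in ranges.values())
    return low, high


low, high = total_cost_range(COST_RANGES)
print(f"One concert: ${low} to ${high} before travel")
```

Under these figures, a single night out lands between $170 and $450 per person before any travel, which is consistent with the "several hundred dollars" estimate.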

As a result, consumers are becoming more selective about which artists they are willing to spend money on.

Streaming Success Does Not Equal Ticket Sales

One of the biggest misconceptions in today's music industry is that streaming numbers automatically translate into concert demand.

This simply isn't true.

An artist may generate hundreds of millions of streams while maintaining a relatively passive audience. Many listeners consume music through playlists, algorithmic recommendations, and social media snippets without developing a deep connection to the artist.

In previous generations, fans often purchased full albums and followed artists closely throughout their careers.

Today's listeners frequently engage with individual songs rather than entire artist brands.

This creates a major problem for arena tours.

Just because a rapper has a viral hit on Spotify, TikTok, or YouTube does not mean thousands of people are willing to spend hundreds of dollars to see them perform live.

The gap between digital popularity and real-world demand has never been wider.

The Rise of Festival Culture

Another major challenge facing rappers is the growing popularity of music festivals.

Many fans now prefer festivals over standalone arena shows because they offer greater value.

Instead of paying premium prices to watch one artist perform for 90 minutes, consumers can attend a festival and see dozens of artists across multiple stages.

Major festivals continue to attract huge audiences because they provide:

- Multiple headliners

- Diverse music genres

- Social experiences

- Better perceived value

- Unique content for social media

For younger audiences, festivals often feel more exciting than traditional arena concerts.

This trend is especially affecting mid-tier rap artists who previously relied on arena touring revenue.

Oversaturation Is Hurting Demand

The music industry is more crowded than ever.

Every day, thousands of new songs are uploaded to streaming platforms.

Social media has created an environment where artists can achieve viral success almost overnight.

While this has created opportunities, it has also led to oversaturation.

Fans have more choices than ever before.

Instead of focusing on a handful of superstar artists, audiences now divide their attention across hundreds of musicians, influencers, content creators, gamers, and entertainers.

The competition for attention is relentless.

As a result, maintaining long-term fan loyalty has become significantly harder.

Arena Ticket Prices Have Reached a Breaking Point

Perhaps the biggest factor impacting arena attendance is ticket pricing.

Many fans simply believe concerts have become too expensive.

Dynamic pricing systems have pushed ticket costs to unprecedented levels.

While these systems help maximize revenue, they often alienate core fans.

When consumers see ticket prices fluctuating dramatically or reaching premium levels, many choose not to attend at all.

Fans increasingly ask themselves:

"Do I really want to spend $300 to see this artist?"

For many rappers, the answer from consumers is becoming "no."

Artists who were capable of selling out arenas five years ago may no longer generate enough demand to justify those ticket prices.

Social Media Has Changed Fan Relationships

Social media was once considered a powerful promotional tool for artists.

While it still plays an important role, it has also created unexpected challenges.

Fans now have unprecedented access to artists through:

- TikTok

- YouTube

- Twitch

- Podcasts

- Livestreams

As a result, the exclusivity that once surrounded celebrities has diminished.

In previous decades, attending a concert was one of the few opportunities fans had to experience their favorite artist.

Today, fans can watch hours of content from artists without ever purchasing a ticket.

This constant accessibility has reduced some of the urgency surrounding live events.

Hip-Hop Is Facing Increased Competition

Although hip-hop remains one of the world's most influential genres, it is no longer the uncontested leader it once appeared to be.

Several genres are experiencing significant growth, including:

Country Music

Country artists continue to dominate touring revenues and frequently sell out stadiums and arenas.

Fans of country music often demonstrate exceptionally high loyalty and stronger ticket-buying habits.

Electronic Dance Music (EDM)

EDM festivals and events continue to thrive globally.

The community aspect of electronic music creates highly engaged audiences willing to travel long distances for live experiences.

Latin Music

Latin artists are experiencing unprecedented international growth.

Their concerts often attract passionate fan bases that consistently support live performances.

K-Pop

K-pop remains one of the strongest touring genres in the world.

Fan communities are highly organized and extremely committed to supporting artists both online and offline.

As competition intensifies, rap artists must work harder to attract consumer spending.

Some Artists Have Outgrown Their Peak Commercial Momentum

This is often the most uncomfortable reality.

Not every artist maintains the same level of demand throughout their career.

Artists such as Rick Ross, Kid Cudi, and others have built legendary catalogs and contributed enormously to hip-hop culture.

However, commercial demand naturally evolves.

New generations of listeners emerge.

Musical trends shift.

Consumer interests change.

While legacy artists can still generate significant audiences, filling a 15,000–20,000-seat arena requires extraordinary demand.

Sometimes the market simply no longer supports venues of that size.

This does not diminish an artist's impact or legacy.

It simply reflects changing consumer behavior.

Fans Are Prioritizing Experiences Differently

Younger consumers are spending money differently from previous generations.

Many prioritize:

- Travel

- Experiences

- Gaming

- Technology

- Subscription services

- Fitness memberships

- Content creation equipment

Concerts now compete with countless other entertainment options.

The average consumer's entertainment budget is being divided among multiple platforms and experiences.

This means artists must provide compelling reasons for fans to choose a concert over everything else.

The Future of Hip-Hop Touring

Despite current challenges, hip-hop touring is far from dead.

Instead, the industry is entering a new phase.

Successful artists are adapting by:

Choosing Smaller Venues

Many artists are discovering that smaller venues create:

- Better atmosphere

- Higher demand

- Stronger fan engagement

- Easier sell-outs

A sold-out 5,000-capacity venue often generates more excitement than a half-empty arena.

Creating Premium Experiences

VIP packages, meet-and-greets, exclusive merchandise, and immersive fan experiences are becoming increasingly important revenue streams.

Building Stronger Communities

Artists who actively engage with fans throughout the year tend to maintain stronger touring performances.

Community-building is becoming just as important as releasing music.

Leveraging Direct-to-Fan Marketing

Email lists, fan clubs, membership platforms, and exclusive content are helping artists reduce dependence on algorithms and strengthen relationships with supporters.

The Arena Era Isn't Over, But It's Changing

The struggles faced by artists such as Rick Ross, A$AP Rocky, Kid Cudi, and others do not indicate that hip-hop is declining.

Instead, they reveal a changing music industry.

Streaming has transformed consumption habits.

Ticket prices have reached uncomfortable levels.

Fans have more entertainment choices than ever before.

Social media has altered artist-fan relationships.

And economic realities are forcing consumers to spend more carefully.

Arena tours remain possible, but they increasingly require exceptional demand, strategic pricing, powerful fan engagement, and a compelling live experience.

The artists who adapt to these new realities will continue to thrive.

Those relying on outdated touring models may find that selling out arenas in 2026 is far more difficult than it was just a few years ago.

As the music business evolves, one thing is becoming clear: popularity on a screen does not always translate into people filling seats inside an arena.

Spotify Expands Its Superfan Strategy with AI Tools, Exclusive Content & Fan Subscriptions

Written by Sounds Space

Reason 14 Officially Released: New Features, Workflow Updates & More

Written by Sounds Space

Universal Music Group and TikTok Sign New Multi-Year Licensing Deal in 2026

Written by Sounds Space

Universal Music Group and TikTok Sign New Multi-Year Licensing Deal: What It Means for Artists, Fans, and the Music Industry

UMG and TikTok Rebuild Their Relationship After a Turbulent Split

Universal Music Group and TikTok have officially announced a brand-new multi-year licensing agreement, marking a major turning point for the music industry after months of public tension between the two giants.

According to the official announcement, the deal will provide UMG artists with expanded promotional opportunities on TikTok, including enhanced marketing and advertising campaigns, e-commerce integrations, and a range of artist-focused tools designed to help musicians grow their audiences and generate more revenue.

The announcement arrives roughly two years after one of the most dramatic disputes in modern music streaming history. In early 2024, UMG removed its entire catalog of master recordings and compositions from TikTok following disagreements surrounding artist compensation, artificial intelligence concerns, and platform safety issues.

Now, with this new agreement in place, the relationship between the world’s largest music company and one of the most influential social media platforms appears stronger than ever.

Why Universal Music Group Pulled Its Music From TikTok in 2024

Back in January 2024, UMG shocked the music world when it allowed its licensing agreement with TikTok to expire. As negotiations collapsed, songs from some of the world’s biggest artists disappeared from the platform almost overnight.

That meant users could no longer use music from artists associated with UMG, including major global stars across pop, hip-hop, rock, dance, and country music.

The dispute centered around several major issues:

1. Artist Compensation

UMG argued that TikTok was underpaying artists and songwriters despite the platform’s massive influence on music discovery and viral success.

TikTok had become one of the biggest drivers of streaming culture, with countless songs reaching the charts after trending on the app. However, UMG believed the financial returns for artists did not reflect TikTok’s cultural power and advertising revenue.

2. Artificial Intelligence Concerns

Another key issue involved the rapid rise of AI-generated music.

UMG publicly criticized the spread of unauthorized AI songs that mimicked famous artists. The company expressed concerns about deepfake vocals, copyright abuse, and the potential devaluation of human creativity.

At the time, UMG accused TikTok of failing to properly protect artists from AI-generated content that could harm both reputations and revenue streams.

3. Platform Safety and Content Moderation

UMG also raised concerns regarding online harassment, harmful content, and moderation standards on TikTok.

The company wanted stronger protections for artists and fans while ensuring music was not being used alongside damaging or inappropriate content.

The dispute created major disruption across TikTok, especially for creators whose videos suddenly lost audio due to removed tracks.

The Importance of TikTok in Modern Music Promotion

Despite the conflict, both companies understood one major reality: TikTok has become one of the most powerful music discovery platforms in the world.

In today’s music industry, viral success on TikTok can completely transform an artist’s career within days.

Songs that trend on the platform frequently dominate streaming charts on services like Spotify and Apple Music shortly afterward.

TikTok trends influence:

- Billboard chart performance

- Spotify playlist placements

- Concert ticket sales

- Music downloads and streams

- Fan engagement

- Merchandise purchases

- Cultural conversations

Independent artists have also benefited enormously from TikTok’s algorithm, which allows unknown musicians to reach millions of users organically without traditional label backing.

Because of this, TikTok is no longer viewed as just a social media app. It is now considered a central pillar of global music marketing strategy.

What the New UMG and TikTok Deal Includes

The newly announced multi-year agreement appears designed to address many of the concerns raised during the 2024 dispute.

According to the press release, the partnership will offer several new benefits for UMG artists and songwriters.

Expanded Marketing and Advertising Campaigns

One of the biggest features of the agreement is the promise of enhanced marketing opportunities for artists.

This likely means TikTok and UMG will work more closely together on:

- Official artist campaigns

- Sponsored promotions

- Album release rollouts

- Viral challenge strategies

- Fan engagement campaigns

- Influencer collaborations

- Livestream events

These tools could help artists maximize visibility across TikTok’s enormous global audience.

For labels, this partnership creates new ways to launch music releases more effectively in an era where short-form video dominates online attention.

E-commerce and Artist Monetization Tools

Another major component of the deal involves e-commerce integration.

TikTok has increasingly expanded into online shopping and direct monetization features over the past few years. The new agreement suggests UMG artists will gain greater access to tools that allow them to sell products directly through the platform.

This could include:

- Merchandise sales

- Vinyl and CD bundles

- Concert tickets

- Exclusive digital content

- Artist subscriptions

- Limited edition drops

The integration of e-commerce into music promotion reflects a growing industry trend where artists rely on multiple income streams beyond traditional streaming royalties.

Artist-Centric Features Could Redefine Fan Engagement

The press release also references “artist-centric tools,” a phrase that could have significant implications for the future of music promotion.

These tools may include advanced analytics, audience targeting systems, AI-powered fan insights, and better ways for artists to connect directly with followers.

TikTok’s data ecosystem is incredibly powerful. By combining music trends with user behavior insights, labels and artists can make smarter marketing decisions in real time.

For example, artists may be able to identify:

- Which songs are trending fastest

- Which regions are reacting most positively

- Which creators are driving virality

- What type of content generates the most engagement

This level of precision marketing is becoming increasingly valuable in the streaming era.

The Role of AI in the New Agreement

Artificial intelligence remains one of the biggest talking points surrounding this partnership.

While the original dispute highlighted AI-related tensions, the new deal could signal a more collaborative approach toward managing AI-generated music content.

UMG has been aggressively pushing for stronger protections around artist likenesses, vocals, and copyrighted material.

Meanwhile, TikTok continues investing heavily in AI-powered content tools and recommendation systems.

Industry experts believe the new agreement may include behind-the-scenes safeguards involving:

- AI content labeling

- Copyright detection systems

- Voice cloning protections

- Licensing frameworks for AI-generated music

- Better monetization tracking

As AI technology evolves, partnerships between labels and tech platforms will become increasingly important in defining industry standards.

Why This Deal Matters for the Entire Music Industry

This isn’t just a business agreement between two corporations. It is a major signal about the future direction of the music industry.

The partnership demonstrates how deeply interconnected music labels and social platforms have become.

Streaming alone is no longer enough for artists to maintain long-term success. Social media engagement, creator partnerships, and viral marketing now play equally important roles.

The UMG-TikTok deal may influence future negotiations involving other major labels, such as:

- Sony Music Entertainment

- Warner Music Group

If the partnership proves successful, it could establish new standards for artist compensation, digital promotion, and AI governance across the entertainment industry.

How Artists Could Benefit From the Partnership

For musicians signed to UMG, the renewed agreement could open major opportunities.

Artists today depend heavily on visibility across platforms where younger audiences spend the most time. TikTok remains one of the dominant forces in youth culture and music consumption.

Potential artist benefits include:

Increased Reach

Artists can once again use TikTok’s viral ecosystem to promote new music globally.

Better Monetization

Expanded e-commerce tools could help musicians earn revenue beyond streaming royalties.

Enhanced Fan Relationships

Interactive features may allow deeper engagement between artists and communities.

More Strategic Promotions

Access to better analytics and campaign tools can improve release strategies.

Stronger Brand Collaborations

TikTok’s advertising infrastructure creates more opportunities for sponsorships and influencer campaigns.

TikTok’s Continued Influence on Global Music Culture

Even after years of debate surrounding social media’s impact on creativity, TikTok continues to shape modern music culture at an unprecedented level.

Songs often become popular on TikTok before they ever reach radio stations or streaming playlists.

Entire genres have experienced resurgence thanks to viral trends, including:

- UK garage

- Drum and bass

- Phonk

- Indie pop

- Afrobeats

- Hyperpop

- Classic catalog music

Older songs have also returned to the charts years after release because of viral TikTok moments.

For labels like UMG, maintaining a strong relationship with TikTok is becoming essential rather than optional.

Fans Will Likely Notice Immediate Changes

Music fans and creators will probably see the impact of the deal relatively quickly.

Users can expect:

- More official artist content

- New promotional campaigns

- Greater use of licensed music

- Enhanced livestream experiences

- More integrated shopping features

- Increased artist interaction

Creators who previously struggled with muted videos during the 2024 dispute may also welcome the return of full music availability.

The Future of Music Licensing and Social Media

The UMG and TikTok agreement highlights a larger transformation happening across the entertainment industry.

Music licensing is evolving beyond simple streaming rights into broader partnerships that combine:

- Social media marketing

- AI governance

- E-commerce

- Fan communities

- Creator monetization

- Data analytics

- Digital advertising

Record labels are no longer just music distributors. They are becoming technology-driven media companies that rely heavily on platform partnerships.

At the same time, social platforms increasingly depend on music to maintain engagement and cultural relevance.

The relationship between music and technology has never been more interconnected.

Final Thoughts

The new multi-year licensing agreement between Universal Music Group and TikTok represents one of the most important music industry partnerships of 2026 so far.

After a highly publicized conflict that removed UMG’s catalog from TikTok in early 2024, both companies appear ready to move forward with a more collaborative and commercially ambitious relationship.

For artists, the agreement promises stronger promotional tools, improved e-commerce opportunities, and expanded audience engagement capabilities.

For TikTok, the partnership secures access to one of the world’s largest music catalogs while reinforcing the platform’s role as a dominant force in global music discovery.

And for the wider music industry, this deal could shape how labels, creators, and tech companies work together in the AI-driven future of entertainment.

As music consumption continues evolving through short-form content, creator culture, and social commerce, partnerships like this will likely define the next era of digital music marketing.

Spotify and Universal Music Group Launch Controversial AI Music Tools

Written by Sounds Space

AI and Streaming Fraud Are Becoming Major Threats to the Music Industry

Written by Sounds Space

AI and Streaming Fraud Remain Major Concerns for the Music Industry in 2026

The modern music industry is facing one of the biggest technological challenges in its history. As artificial intelligence continues transforming how music is created, distributed, and consumed, streaming platforms and record labels are now fighting a growing wave of streaming fraud, fake plays, bot manipulation, and AI-generated content flooding digital services.

Companies like Spotify, Apple Music, YouTube, and major record labels are reportedly investing heavily in advanced detection systems designed to identify fraudulent activity and protect legitimate artist royalties.

What was once a relatively simple streaming ecosystem has evolved into a highly complex digital battlefield where algorithms, bots, AI-generated songs, fake listeners, and automated engagement systems are creating serious problems across the music business.

For artists, labels, and streaming platforms alike, the stakes are enormous.

The Growing Problem of Streaming Fraud

Streaming fraud has become one of the music industry’s most urgent problems.

At its core, streaming fraud involves artificially inflating streaming numbers through unethical or illegal methods. This can include:

- Automated bots generating fake plays

- Click farms repeatedly streaming songs

- Purchased playlist placements

- Artificial listener manipulation

- Fake accounts inflating engagement metrics

- Organized streaming farms

The goal is usually financial gain, chart manipulation, algorithm boosting, or increased visibility on streaming platforms.

Because streaming revenue is directly tied to play counts, fraudulent streams can distort royalty payments and unfairly redirect money away from legitimate artists.

For years, industry insiders have warned that fake streams are quietly draining millions of dollars from the music ecosystem.

Now, with AI technology accelerating rapidly, the problem is becoming even more difficult to control.

How AI Is Changing the Music Industry

Artificial intelligence is transforming music creation faster than many experts predicted.

AI tools can now:

- Generate songs

- Clone voices

- Produce instrumentals

- Write lyrics

- Master tracks

- Create cover art

- Simulate artists

- Generate entire albums automatically

Some AI music tools are designed to help artists work more efficiently and creatively. However, others are being used to mass-produce low-quality content specifically designed to exploit streaming algorithms.

This creates a dangerous situation for streaming platforms.

Thousands of AI-generated tracks can now be uploaded quickly and cheaply, overwhelming recommendation systems and potentially diverting royalties away from real musicians.

Industry experts fear that without stronger protections, streaming platforms could become flooded with algorithmically generated “content spam.”

Why Fake Streams Hurt Real Artists

Many casual listeners underestimate how damaging streaming fraud can be.

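Streaming revenue is generally distributed through a shared royalty pool: the money available for payouts is divided among rights holders according to their share of total streams. Because the pool is fixed, fraudulent streams claim a larger portion of it and effectively take money away from legitimate artists.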
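To make the shared-pool effect concrete, here is a minimal sketch of a pro-rata split. The function name, the artist names, the pool size, and the stream counts are all hypothetical values chosen for illustration; real platforms apply additional rules that are not modeled here.

```python
def pro_rata_payouts(pool, streams):
    """Split a fixed royalty pool in proportion to each party's stream count."""
    total = sum(streams.values())
    return {name: pool * count / total for name, count in streams.items()}

# Hypothetical figures: a 1,000-dollar pool and two legitimate artists.
POOL = 1000.0
honest = pro_rata_payouts(POOL, {"artist_a": 600, "artist_b": 400})

# Same pool, but a bot operator adds 1,000 fake streams of its own tracks.
with_fraud = pro_rata_payouts(
    POOL, {"artist_a": 600, "artist_b": 400, "bot_operator": 1000}
)

for name in ("artist_a", "artist_b"):
    print(name, honest[name], "->", with_fraud[name])
```

In this toy case both legitimate artists see their payouts halved even though their own listeners did not change, because the fake streams doubled the total that the pool is divided by.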

For independent musicians already struggling to earn a sustainable income, this issue is especially serious.

When bots generate fake streams:

- Royalties become distorted

- Charts become unreliable

- Discovery algorithms become manipulated

- Real artists lose visibility

- Listener trust decreases

Smaller artists often suffer the most because they lack the financial power and industry influence needed to compete against artificially inflated numbers.

Meanwhile, some fraudulent operators profit by exploiting loopholes in streaming systems.

Spotify and Apple Music Fight Back

Streaming companies are becoming increasingly aggressive in their fight against fraud.

Spotify has reportedly expanded its fraud detection systems using machine learning and behavioral analysis tools capable of identifying suspicious activity patterns.

These systems monitor:

- Unusual streaming spikes

- Repetitive listening behavior

- Geographic inconsistencies

- Fake account activity

- Bot-driven engagement

- Abnormal playlist traffic

Similarly, Apple Music and other platforms are reportedly strengthening verification processes and internal monitoring systems designed to detect manipulation attempts before fraudulent streams impact payouts.

Record labels are also investing heavily in anti-fraud technology partnerships and analytics systems.

The goal is not just protecting revenue, but also maintaining trust in the streaming ecosystem itself.
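As a rough illustration of one signal from the list above, unusual streaming spikes, the sketch below flags days whose play count sits far above the trailing week's average. This is an assumed, simplified approach and not how Spotify or any other platform actually works; the function name, the seven-day window, and the threshold of three standard deviations are all hypothetical choices.

```python
from statistics import mean, stdev

def flag_spikes(daily_streams, window=7, threshold=3.0):
    """Return indices of days that exceed the trailing mean by more than
    `threshold` standard deviations of the preceding `window` days."""
    flagged = []
    for i in range(window, len(daily_streams)):
        history = daily_streams[i - window:i]
        mu = mean(history)
        # Floor the spread at 1.0 so a perfectly flat history cannot
        # make every tiny increase look like a spike.
        sigma = max(stdev(history), 1.0)
        if daily_streams[i] - mu > threshold * sigma:
            flagged.append(i)
    return flagged

# Hypothetical daily play counts for one track; day 7 jumps to 5,000.
plays = [100, 110, 95, 105, 98, 102, 107, 5000, 104]
print(flag_spikes(plays))  # [7]
```

A production system would presumably combine many such signals, including account age, geography, and listening patterns, rather than rely on a single statistical threshold.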

The Rise of AI-Generated Music Spam

One of the music industry’s newest fears is the rise of AI-generated “music spam.”

This refers to massive amounts of automatically generated songs uploaded primarily to exploit algorithms rather than provide meaningful artistic value.

Some operators reportedly use AI tools to create:

- Ambient tracks

- Lo-fi music

- Sleep sounds

- Fake instrumental albums

- Generic mood playlists

- AI-generated vocals

These tracks are often uploaded at massive scale across streaming services.

Because AI dramatically reduces production costs, bad actors can generate hundreds or thousands of tracks quickly. Even if each track earns only small amounts of streaming revenue, the combined scale can create significant profits.
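The arithmetic behind that scale effect can be sketched in a few lines. Every number here is a hypothetical assumption for illustration only: the catalogue size, the streams per track, and the payout rate of 3 dollars per 1,000 streams are not figures reported by any platform.

```python
def monthly_revenue(tracks, streams_per_track, rate_per_thousand):
    """Estimated monthly payout in dollars for a catalogue of tracks."""
    total_streams = tracks * streams_per_track
    return total_streams * rate_per_thousand / 1000

# Hypothetical inputs: 200 streams per track per month at 3 dollars
# per 1,000 streams.
one_track = monthly_revenue(1, 200, 3.0)
catalogue = monthly_revenue(5000, 200, 3.0)

print(f"one track: ${one_track:.2f}, 5,000 tracks: ${catalogue:,.2f}")
```

Under these assumptions a single track earns well under a dollar a month, while 5,000 such tracks together earn thousands, which is why near-zero production costs change the economics.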

Industry executives worry that this trend could:

- Flood recommendation systems

- Reduce visibility for human artists

- Lower content quality overall

- Damage listener trust

- Create oversaturation problems

As AI tools improve, distinguishing human-created music from machine-generated content may become increasingly difficult.

The Debate Around AI Music

Not all AI-generated music is viewed negatively.

Many artists and producers are embracing AI as a creative tool rather than a replacement for human artistry.

AI can help musicians:

- Brainstorm ideas

- Speed up workflows

- Enhance production quality

- Generate creative inspiration

- Improve mastering

- Create demos faster

Some producers compare AI tools to earlier technological shifts such as synthesizers, digital audio workstations, and Auto-Tune.

However, the biggest concerns arise when AI is used deceptively or exploitatively.

Problems emerge when:

- AI clones artist voices without permission

- Fake songs impersonate real musicians

- AI content floods streaming services

- Fraudulent uploads manipulate royalties

- Bots artificially inflate AI-generated tracks

The debate is no longer simply about technology. It is about ethics, ownership, transparency, and fairness.

Voice Cloning Raises Serious Questions

One of the most controversial developments in AI music involves voice cloning technology.

AI systems can now recreate artist voices with remarkable realism. This has already led to viral fake songs that imitate famous musicians without their consent.

Several AI-generated tracks using cloned celebrity voices have already spread across the internet, generating millions of plays before being removed.

This creates enormous legal and ethical concerns.

Artists are increasingly worried about:

- Unauthorized voice replication

- Identity theft

- Copyright violations

- Brand damage

- Lost income

- Fake collaborations

Record labels are pushing for stronger regulations surrounding voice likeness rights and AI-generated impersonations.

As the technology improves, these concerns are expected to grow.

Why Streaming Platforms Face a Difficult Challenge

Streaming companies are in an extremely difficult position.

On one hand, platforms want to encourage innovation and allow artists to experiment with AI creatively. On the other hand, they must prevent abuse, fraud, and manipulation.

This balancing act is becoming increasingly complex because AI-generated content is not always easy to identify.

Unlike obvious spam or fake accounts, advanced AI music can sometimes sound surprisingly convincing.

Platforms must now determine:

- What qualifies as authentic artistry

- How AI-generated music should be labeled

- Whether AI tracks deserve royalties

- How to detect fraudulent uploads

- How to protect human creators

These questions are shaping the future of digital music distribution.

The Financial Stakes Are Massive

The streaming economy is worth billions of dollars annually.

Music streaming now dominates global music consumption, making royalty systems critically important for artists, labels, publishers, and platforms alike.

Any large-scale fraud or manipulation threatens the financial stability of the industry.

Industry analysts believe fake streaming operations may already account for substantial percentages of total platform activity in certain genres or regions.

If left unchecked, streaming fraud could:

- Reduce advertiser trust

- Harm subscription growth

- Damage chart credibility

- Undermine artist confidence

- Create unfair market conditions

This is why streaming platforms are investing heavily in detection systems, moderation teams, and AI-based fraud prevention technologies.

Independent Artists Face the Biggest Risks

Independent musicians are particularly vulnerable in this evolving landscape.

Unlike major labels with large marketing budgets and direct platform relationships, independent artists often rely entirely on algorithmic discovery to reach audiences.

If algorithms become flooded with fake engagement and AI-generated spam, smaller artists may struggle even more to gain visibility.

Many independent creators already feel frustrated by:

- Low royalty payouts

- Oversaturated platforms

- Playlist gatekeeping

- Discovery challenges

- Fake engagement competition

The rise of AI-generated music and streaming fraud adds another obstacle to an already difficult industry environment.

Governments and Regulators May Step In

As AI music technology continues evolving, governments and legal authorities may eventually introduce stricter regulations.

Possible future regulations could include:

- Mandatory AI labeling

- Voice likeness protections

- Stronger copyright enforcement

- Anti-bot legislation

- Streaming transparency requirements

- Platform accountability rules

Some industry experts believe regulation is inevitable as AI-generated content becomes increasingly widespread.

However, implementing global standards may prove difficult because streaming platforms operate internationally across different legal systems.

The Future of Music in the AI Era

Despite growing concerns, many experts believe AI itself is not the enemy.

The real issue is how the technology is used.

AI has the potential to become an incredible creative tool that empowers artists, enhances production, and expands musical possibilities. But without ethical safeguards and strong anti-fraud protections, the technology could also create serious economic and artistic problems.

The music industry is now entering a critical transitional period where platforms, labels, artists, and lawmakers must work together to establish new standards.

The outcome could shape the future of music for decades.

Final Thoughts

The battle against streaming fraud and AI-generated manipulation has become one of the defining challenges of the modern music industry.

As platforms like Spotify, Apple Music, and YouTube continue investing in advanced detection systems, the industry is attempting to protect artist royalties, maintain listener trust, and preserve the integrity of streaming itself.

At the same time, artificial intelligence continues reshaping music creation at an astonishing pace.

The challenge moving forward will be finding the right balance between innovation and protection.

AI is already changing music forever. The question now is whether the industry can adapt quickly enough to prevent fraud, protect artists, and maintain fairness in an increasingly automated digital landscape.


Kanye West India Concert Reportedly Canceled Over Safety Concerns

Written by Sounds Space

Kanye West Concert in India Reportedly Canceled Amid Safety Concerns

The global music industry is once again turning its attention toward Kanye West after reports surfaced that his highly anticipated concert in India has been canceled over safety concerns. While official details remain limited, the news has already sparked widespread discussion online, with fans, industry insiders, and media outlets debating what led to the reported cancellation.

For years, Kanye West — also known as Ye — has remained one of the most controversial, unpredictable, and influential figures in modern music culture. From groundbreaking albums and billion-dollar fashion ventures to headline-making controversies and public disputes, Kanye has consistently operated at the center of global attention.

Now, the reported cancellation of his India performance adds another unexpected chapter to his already turbulent public career.

The Reported India Concert Cancellation

According to emerging reports circulating across entertainment media and industry sources, Kanye West’s planned live performance in India was allegedly canceled due to safety-related concerns connected to event logistics, crowd management, and broader security considerations.